What is the U.S. Artificial Intelligence Safety Institute (USAISI)?

The U.S. Artificial Intelligence Safety Institute (USAISI) is an initiative by the United States federal government to address artificial intelligence (AI) safety and trust.

The Institute, which was established by the Department of Commerce through the National Institute of Standards and Technology (NIST), will focus on establishing guidelines and metrics for safe and trustworthy AI. A related consortium called the AI Safety Institute Consortium will help develop tools to measure and improve AI safety and trustworthiness.

Techopedia Explains

USAISI and its consortium are part of NIST’s response to the Biden-Harris Administration’s Executive Order on the Safe, Secure, and Trustworthy Development and Use of AI.

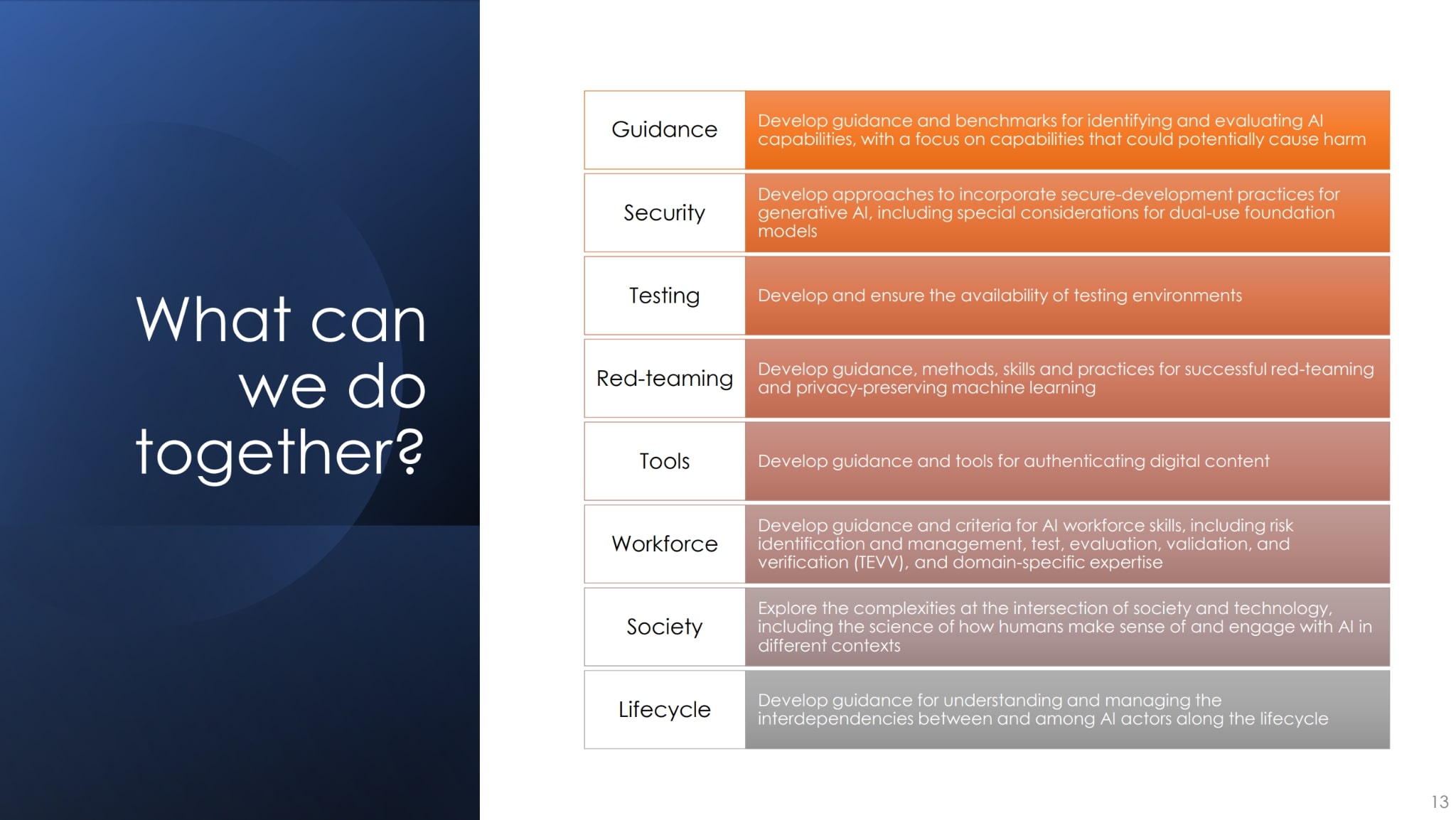

President Biden’s executive order has tasked NIST with a number of responsibilities, including:

- Developing best practices and procedures for assessing and auditing AI system capabilities. This includes setting rigorous standards for extensive red-team testing to ensure safety before public release.

- Developing a companion resource on generative AI for NIST’s AI Risk Management Framework (AI RMF).

- Developing a companion resource to NIST’s Secure Software Development Framework that will address secure development practices for generative AI and multi-modal AI foundation models.

- Launching an initiative to create guidance and benchmarks for evaluating and auditing AI capabilities, with a focus on capabilities that could cause harm in areas such as cybersecurity and biosecurity.

- Providing guidance on how to authenticate content created by humans and reliably identify AI-generated content (which NIST refers to as synthetic content).

- Creating test environments for AI systems.

What is the AI Safety Institute Consortium?

The AI Safety Institute Consortium will bring technology companies, other government agencies, and non-profit organizations together to identify reliable, adaptable, and compatible tools for measuring AI trustworthiness and safety.

The Consortium will be responsible for developing new guidelines, protocols, and best practices to facilitate the establishment of industry standards that can be used to develop and deploy AI in safe, secure, and trustworthy ways. This includes guidance on evaluating AI capabilities, authenticating content, and developing workforce skills.

NIST has invited organizations that are interested in participating in the consortium to submit letters of interest.

Each letter of interest should describe the potential participant’s technical expertise and provide supporting evidence (data and documentation) that demonstrates the applicant’s experience enabling safe and trustworthy AI systems through NIST’s AI RMF.

Desirable areas of technical expertise include:

- AI System Design and Development: The ability to conceptualize, build, and implement AI systems.

- AI System Deployment: Involves the rollout and maintenance of AI systems in operational environments.

- AI Metrology: This refers to measuring and verifying AI performance, accuracy, and reliability.

- AI Governance: Refers to the policies, processes, and structures used to guide and control AI development and use.

- AI Safety: Focuses on ensuring that AI systems do not pose unintended harm and function safely under all conditions.

- Trustworthy AI: Involves building AI systems that are reliable, ethical, and trustworthy by design, for example through AI trust, risk, and security management (AI TRiSM).

- Responsible AI: Emphasizes the ethical development and use of AI, including considerations of societal impact and fairness.

- AI Red Teaming: Involves establishing methods to assess AI systems by attempting to exploit their weaknesses or anticipate potential failures.

- Human-AI Teaming and Interaction: This includes the study and design of systems where humans and AI work collaboratively.

- Socio-technical Methodologies: This refers to approaches that consider both social and technological needs in the design and implementation of AI systems.

- AI Fairness: Involves ensuring that AI systems do not perpetuate bias and that their outputs are fair.

- AI Explainability and Interpretability: Ensures AI decision-making processes are transparent, understandable, and explainable.

- Workforce Skills: This includes developing the necessary skills in the workforce to create, manage, and interact with AI systems.

- Psychometrics: This area of scientific study – which involves measuring human intelligence, mental capacities, and processes – can be useful for understanding human-AI interactions and AI’s impact on individuals.

- Economic Analysis: This involves understanding the economic implications and impacts of AI, including cost-benefit analyses, market impacts, and economic efficiency.

Consortium members will be expected to support consortium projects and to contribute facility space for, or help host, consortium researchers, webinars, workshops, conferences, and online meetings.

Selected participants will be required to enter into a consortium Cooperative Research and Development Agreement (CRADA) with NIST.

CRADAs allow federal and non-federal researchers to collaborate on research and development (R&D) projects and share resources, expertise, facilities, and equipment. At NIST’s discretion, entities that are not legally permitted to enter into this type of agreement may still be allowed to participate.

The Future of USAISI

According to NIST Director Laurie E. Locascio, the U.S. AI Safety Institute Consortium will enable close collaboration among government agencies, companies, and impacted communities to help ensure that AI systems are safe and trustworthy.

Working groups for the U.S. Artificial Intelligence Safety Institute are currently being created to support the development of responsible AI.

The standards, guidelines, best practices, and tools developed by USAISI are expected to align with international guidelines and influence future legal and regulatory frameworks for AI around the globe.

References

- Executive Order on the Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence (The White House)

- Secure Software Development Framework (NIST)

- Opening Remarks by Deputy Secretary of Commerce Don Graves at U.S. AI Safety Institute Workshop (NIST)

- AI Risk Management Framework (NIST)

- NIST Seeks Collaborators for Consortium Supporting Artificial Intelligence Safety (NIST)

- A USAISI Workshop: Collaboration to Enable Safe and Trustworthy AI (NIST)