X, formerly known as Twitter, has long been considered the pulse of global news and events. However, as misleading and questionable content permeates the platform, it is now accused of sowing confusion about the Israel-Hamas conflict.

But this problem is much bigger than Elon Musk’s latest plaything, and we all share a responsibility to pause before hitting the Like or Share button, or getting into an argument with a bot.

Firsthand accounts from the ground are essential for nuanced reporting on any evolving situation. Yet, primary sources are notably scarce in conflict zones like Palestine and southern Israel. This absence makes it difficult to hear the voices of everyday civilians impacted by the conflict. Such a void is quickly filled by disinformation or simplistic narratives that emotionally manipulate global audiences.

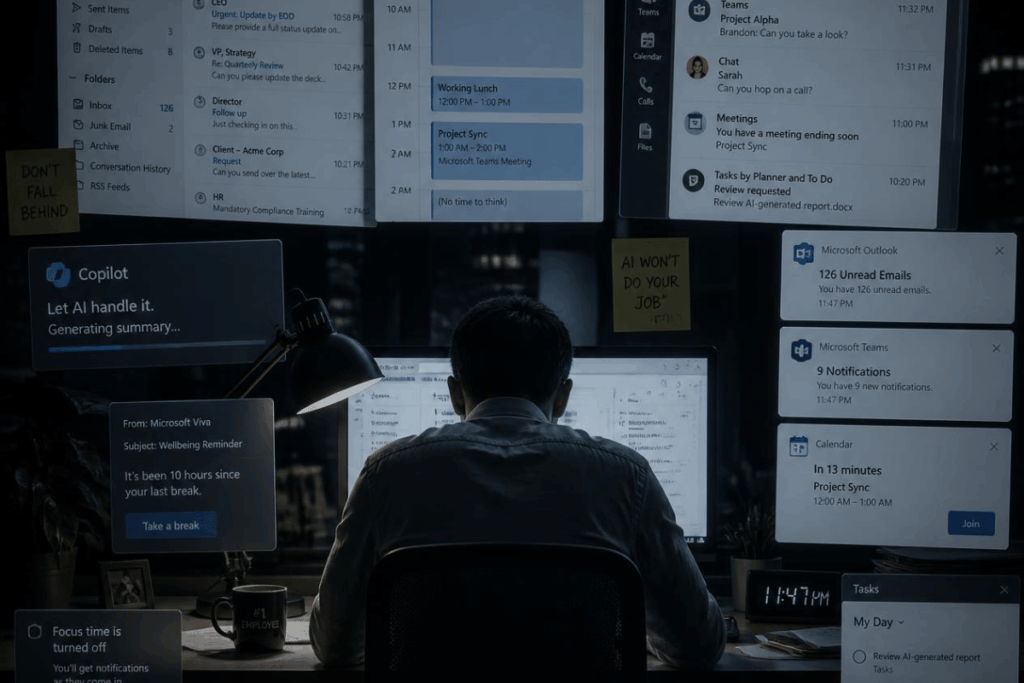

Emotion Over Facts: The Algorithmic Drivers of Polarization

Algorithms and paid subscriptions play a significant role in driving polarization and the degradation of journalism. These mechanisms amplify voices from verified accounts or those with substantial followings, often overshadowing grassroots perspectives.

While those with premium subscriptions and blue checkmarks may provide valuable insights, their prominence on platforms like X can inadvertently create an echo chamber. This often sidelines local perspectives, raising questions about the platform’s algorithmic responsibility. Shouldn’t technology platforms adapt algorithms to present a more holistic view, especially in politically sensitive areas?

Social media is ripe for exploitation by entities intent on manipulating public opinion for political agendas, marketing schemes, or ideological proliferation. Because the algorithms prioritize engagement, misleading or emotionally charged narratives can often gain more traction than nuanced, factual ones, further amplifying the impact of misinformation.

Moreover, the anonymity and reach afforded by social media provide a fertile ground for astroturfing campaigns, where orchestrated efforts can create a façade of grassroots support or opposition for a particular issue. Bot accounts, deepfakes, and other forms of artificial engagement can produce a skewed perception of public sentiment, influencing real individuals to rally behind or oppose a cause based on false premises.

But as users, how can we better separate fact from fiction?

How to Identify if You Are Being Manipulated

Social media platforms have become extraordinarily potent channels for shaping public opinion, and this capability has its darker implications. Through targeted advertisements, echo chambers, and algorithmic personalization, these platforms can feed users a tailored worldview that aligns with their pre-existing beliefs and biases. Emotionally charged content, often leaning on sensationalism, reinforces those biases and can eclipse objective facts.

The skills required to recognize the emotional triggers in phishing attempts are remarkably similar to those needed for identifying fake news and disinformation online. Just as phishing relies on emotional manipulation to bypass our rational faculties, disinformation aims to tap into our primal feelings (fear, anger, or a sense of injustice) to elicit a quick, uncritical response. This emotional arousal can blind us to logical inconsistencies, doubts about source credibility, and other red flags that would normally prompt skepticism. Therefore, we must extend the same vigilance to our broader online engagement.

As consumers of digital information, we need to cultivate the emotional intelligence that allows us to pause and critically assess the information we encounter, particularly when that information triggers strong emotional reactions. This form of mindful consumption is especially critical in today’s polarized and complex information landscape, where emotionally charged fake news can have real-world consequences.

Best Practices to Filter Through Misinformation

In a world inundated with data and information, the ability to sift through the noise and tell factual content from misleading content is crucial. One of the cardinal rules for navigating this complex landscape is to cultivate a habit of skepticism. Before sharing, endorsing, or even absorbing a piece of content, ask yourself questions like “Who authored this?”, “What are their credentials?”, and “Is the source reputable?”

These simple measures are highly beneficial when encountering news on social media platforms, where misinformation can spread virally. Additionally, it’s advisable to look for journalistic elements like credible citations, direct quotes from involved parties, and confirmations from multiple sources.

Thankfully, individuals can employ various actionable steps to dodge the misinformation bullet. Consider leveraging the power of fact-checking organizations like Snopes, FactCheck.org, or more localized services within your jurisdiction. Such organizations are dedicated to verifying information and can robustly supplement your scrutiny.

Similarly, tools like Feedly and Pocket can help you curate trusted sources, acting as your initial filter. Social media platforms like X and Meta’s properties (Facebook and Instagram) have also taken steps to label or flag misleading or unverified content.

Question Everything

The effectiveness and impartiality of the labels and flags that platforms apply remain debated. While these platforms attempt to police content, the onus still largely falls on individual users to practice vigilant and informed content consumption.

Simple cues, like poor grammar or emotional language, can often serve as red flags. Furthermore, the validity of a web address or source often provides preliminary clues about the credibility of the content. However, it’s essential to understand that misinformation is increasingly sophisticated, sometimes bypassing these more apparent markers.

The speed at which news travels on social media has led even respected mainstream media outlets to fall into the trap of rushing to be the first to break a story. Phrases like “the BBC understands” or “unconfirmed reports” are becoming increasingly common as news organizations prioritize immediacy over in-depth verification. This urgency often sacrifices the meticulous fact-checking that was once the cornerstone of journalistic integrity.

Combining individual discernment, technological tools, and feedback from credible institutions offers the best defense in the fight against misinformation. This is not just a personal safeguard but a societal necessity, considering misinformation’s impact on communities and democratic processes.

The Bottom Line

Big tech must consider the ethical implications of promoting paid or verified content at moments when unbiased, on-the-ground reporting is indispensable. This raises broader concerns about balancing profitability with responsible journalism, ensuring algorithmic fairness, and advocating equitable representation in our digital ecosystem.

The credibility of information, whether sourced from an independent blog or a well-established news organization, should always be rigorously questioned. The immediacy that digital platforms afford can compromise even the most reputable sources, which is why individuals need more than one way to verify what they read.

Adopting a culture of questioning and cross-referencing, even when consuming content from traditionally reliable outlets, is becoming essential in our rapidly evolving information landscape. Better still, activate Monk Mode on your devices and enjoy a conversation offline with someone who will challenge your worldview and set you free from your echo chamber.