Techopedia interviews cybersecurity expert and former US Department of Defense cyber training developer John Hammond to discuss the fine line between innovation and security in software development.

With the SEC introducing strict rules for reporting cyberattacks and security breaches, we also explore how the security community can work together against threat actors and what transparency brings to the digital world.

According to Hammond, “It means we are playing together — it’s a team sport, and when we’re all playing in harmony, it’s a good thing.”

We also explore how we can address security threats in the age of AI.

About John Hammond

John Hammond is a cybersecurity researcher, educator, and content creator. As an instructor at the Department of Defense Cyber Training Academy, he taught the Cyber Threat Emulation course, training civilian and military personnel in offensive Python, PowerShell, and other scripting languages, as well as the adversarial mindset.

As part of the Threat Operations team at Huntress, John spends his days making hackers earn their access and helping tell the story. He has developed training material and information security challenges for events such as picoCTF and competitions at DEF CON. He also runs a YouTube channel featuring programming tutorials, CTF walkthroughs, and other cybersecurity content.

Key Takeaways

- New SEC rules requiring companies to report cybersecurity breaches will help businesses cooperate while addressing threats.

- Remote, cloud-based work creates security vulnerabilities when multiple family members share the same devices and browsers.

- Many recent breaches have not used malware; attackers are taking advantage of software integrations and connections to gain access.

- AI has several applications in cybersecurity: analyzing network traffic, communications, and behavior to detect anomalies in traffic or files; raising awareness of what threats look like; and reviewing code as it is written to flag potential vulnerabilities.

- There is a “spicy danger zone” between research innovation and security, and guardrails will need to be put in place to secure applications in the real world.

The SEC’s New Cybersecurity Reporting Requirements

Q: The US Securities and Exchange Commission’s new cybersecurity reporting requirements for public companies have recently come into effect. What does this mean for businesses that now have to comply?

A: When a company is hit with a cyberattack, intrusion, or breach that it determines is material, it now has four business days to report it to the Securities and Exchange Commission (SEC), which is quite a quick turnaround.

If companies just need to report that they see something nefarious and they’re digging into it, that’s reasonable within a four-day time frame.

But a full, thorough incident response investigation, making sure they’re back in action, restoring from backups, and executing their business continuity plan could take weeks or months, certainly more than four days.

Still, tightening the belt with stricter, faster-paced rules and regulations will make companies much more transparent.

It may even remove the stigma around a company suffering a hack, incident, or breach, so we can be the adults in the room who acknowledge these weaknesses in cybersecurity.

Ultimately, it means we’re going to get better, because the community can now rally behind affected companies and help with threat intelligence.

That will certainly prompt some conversation from cyber insurance companies, vendors, and other folks trying to jump in and help.

It means we are playing together. It’s a team sport, and when we’re all playing in harmony, it’s a good thing.

The Cybersecurity Threats and Attacks of 2024

Q: What are some of the most common types of attacks and threats that are really driving the change in the landscape?

A: For the longest time, we focused on endpoints: the computer, the device, the software, and threats like malware and phishing emails.

Those threats are still there. You’ll see the usual social engineering, whether that’s the MGM casino hack, where attackers tricked staff over the phone, or infostealers gaining further access. Ransomware is still absolutely at play.

Following the more recent big headlines, we were tracking the MOVEit Transfer exploitation, the GoAnywhere Managed File Transfer zero-day attack, and other intrusions by ransomware gangs.

One interesting trend is that these gangs often don’t encrypt the file system anymore; they just steal information and use it as leverage for blackmail and extortion. We still see ransomware and those endpoint threats a lot.

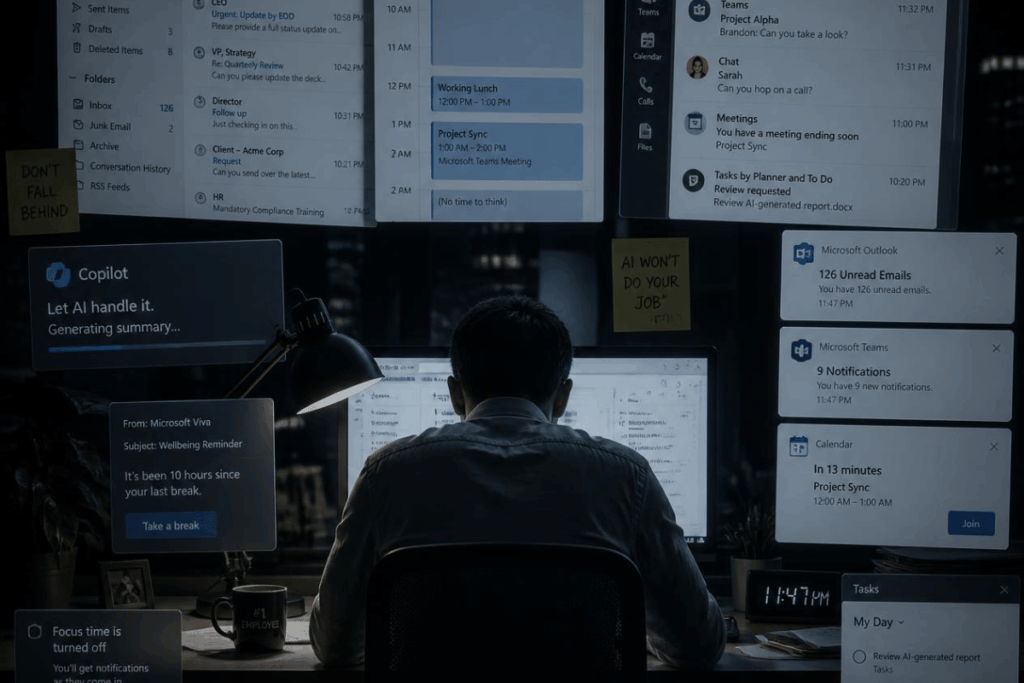

A lot of businesses have moved to the cloud, that big amorphous buzzword, along with all the online applications and services they rely on, like email, or files that move back and forth through tools like SharePoint or Microsoft Teams.

If you’re chatting with co-workers or setting meetings on a calendar, that is all web-based in the cloud, so there’s even more emphasis on business email compromise and account takeover, attacks that go after a person’s identity.

Attackers are no longer strictly after a computer or an endpoint; they’re after the person. We’ve especially seen that in many recent breaches, where attackers don’t use malware and aren’t writing any fancy code or exploits; they’re just taking advantage of the integrations and connections we’ve bundled into one place.

Q: How should that shape cybersecurity priorities for organizations, whether small or large?

A: The basic, bare-bones cybersecurity hygiene that we all keep shouting from the rooftops still needs to be said. The reason we keep saying it is that it’s the right answer.

We can get a little more tactical, though. Beyond patching your systems, using antivirus software and a password manager, and following advice like “don’t plug in USB drives or click email links,” there are some more nuanced things I would like to bring to the forefront.

When it comes to information stealers and malware that can open the door to social engineering, one thing especially pertinent in this post-pandemic world is that so many people are working from home.

Remote professionals might have a studio or home office, but they may also live with family. If it’s a shared device, or if everyone uses the family computer, that machine could hold a mix of corporate credentials for logging into the office VPN or the company’s infrastructure environment.

Then the kids might be doing their homework or playing video games, and if they install some cheats or hacks, those could come with malware.

That’s worth harping on, because it can create an accidental, wide-open weakness. So it’s important not to share work devices with kids or save credentials in a browser.

AI and the Military

Q: How do you see the collaboration between OpenAI and the Pentagon, especially given the current cybersecurity and geopolitical environment?

A: It is an understandable and necessary thing; we could have seen this coming.

The United States and the rest of the world are getting pretty good at artificial intelligence, though we’re still figuring it out.

It’s not some magic silver bullet yet, but it would make sense for the military or governments to want to keep that close.

OpenAI is still very strict that it won’t assist with weapons or with developing or using artificial intelligence for weaponry.

But maybe you can streamline a lot of monotonous work, whether it’s managing the logistics of where soldiers or arms go or writing reports. It makes complete sense that we would put AI where it could best be used.

I’m not so alarmist as to think we’re in the Terminator era, where the robot or the AI will be the one pulling the trigger.

In the context of cybersecurity, it’s interesting on both offense (threat actors, hackers, and adversaries) and defense.

From the offensive perspective, AI can’t simply find or invent a new zero-day exploit on its own, because it doesn’t have the data or the training.

If we, as users, give it enough puzzle pieces, saying “Here’s the disassembly, here are the decompiled variables and other technical witchcraft,” it can follow along and help, but it’s still doing what the human would have needed to do to begin with.

I will note, though, that if you get a little tactical in what you ask it, prompt engineering to create ransomware and other attacks is probably something folks could dig into.

AI on the Offense and the Defense

Q: So, what do companies need to be aware of when they think about the role AI will play in hacks and other threats?

A: It’s a matter of knowing what is out there. Education and awareness can only go so far, but they go pretty far. Being extra vigilant about phishing emails is sometimes treated as a crutch, but it is especially valuable.

Fostering a culture where people are willing to ask, or willing to say, ‘I just got this really weird email,’ ‘I see something strange on the website,’ or ‘Something is weird on my computer; can I get another set of eyes on it?’ is a good thing.

We need to build and establish a culture where we get security right. We can clearly see when we get security wrong.

Q: What is the potential for the use of AI in cybersecurity training — is there a move towards that?

A: AI is good at making sense of data, especially vast amounts of it; that’s exactly the point.

Where AI will be especially useful is that we have so much network traffic, communication, and behavior to analyze.

As activity happens, a company or organization can feed that data into AI, which will be smarter and better at building a baseline of what’s normal, so it can then detect anomalies such as odd traffic or discrepancies in file locations.
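The baseline idea can be illustrated without any AI at all. The sketch below is a deliberately simple statistical stand-in, not a machine learning model and not how any particular product works: it summarizes “normal” as the mean and standard deviation of past observations, then flags new values whose z-score exceeds a threshold. The traffic numbers, the function names, and the threshold of 3 are all hypothetical.

```python
from statistics import mean, stdev

def build_baseline(samples):
    """Summarize 'normal' activity as a (mean, standard deviation) pair."""
    if len(samples) < 2:
        raise ValueError("need at least two samples to estimate spread")
    return mean(samples), stdev(samples)

def find_anomalies(observations, baseline, threshold=3.0):
    """Return (index, value) pairs whose z-score magnitude exceeds threshold."""
    mu, sigma = baseline
    anomalies = []
    for i, value in enumerate(observations):
        if sigma == 0:
            # Perfectly flat baseline: any deviation at all is anomalous.
            z = 0.0 if value == mu else float("inf")
        else:
            z = (value - mu) / sigma
        if abs(z) > threshold:
            anomalies.append((i, value))
    return anomalies

# Hypothetical outbound megabytes per hour for one host during a normal week.
history = [120, 135, 128, 140, 131, 125, 138, 129, 133, 127]
# Hypothetical readings for today, including one unusually large transfer.
today = [130, 126, 910, 134]

baseline = build_baseline(history)
print(find_anomalies(today, baseline))  # [(2, 910)]
```

Real detection systems model many signals at once (time of day, destinations, file paths), but the shape is the same: learn what normal looks like, then surface what falls far outside it.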

AI can also raise awareness of the many permutations threats can take. If you ask ChatGPT to write a phishing email, whether you’re a threat actor or using it to build awareness, it can do that.

Another possibility is helping with writing software. We saw the MOVEit Transfer exploitation happen because it was a product with 10- to 15-year-old code and a lot of technical debt.

With AI in the mix as you write and develop software, it could provide an extra set of eyes, pointing out a possible SQL injection vulnerability, an incompatible function, or a better practice to implement, and that would be valuable.
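To make “SQL injection vulnerability” concrete, here is a minimal sketch of the kind of pattern a code reviewer, human or AI-assisted, would flag. It uses Python’s built-in `sqlite3` module with an in-memory database; the `users` table and both function names are hypothetical. The unsafe version pastes user input into the SQL text, so crafted input rewrites the query; the safer version uses a `?` placeholder so the input is bound as a value.

```python
import sqlite3

def find_user_unsafe(conn, username):
    # Vulnerable: user input is pasted directly into the SQL text,
    # so input like "' OR '1'='1" changes the query's logic.
    query = f"SELECT id, name FROM users WHERE name = '{username}' ORDER BY id"
    return conn.execute(query).fetchall()

def find_user_safe(conn, username):
    # Safer: the ? placeholder makes sqlite3 bind the input as data,
    # never as SQL syntax.
    query = "SELECT id, name FROM users WHERE name = ? ORDER BY id"
    return conn.execute(query, (username,)).fetchall()

# Hypothetical demo data in a throwaway in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

malicious = "' OR '1'='1"
print(find_user_unsafe(conn, malicious))  # [(1, 'alice'), (2, 'bob')]
print(find_user_safe(conn, malicious))    # []
```

The fix is small, which is exactly why an automated second look is useful: the vulnerable line looks ordinary until someone asks where the input comes from.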

Even in training, AI could simulate implementing a policy to make sure it has the appropriate configuration settings.
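Checking a configuration against a policy can be sketched as a plain rule-based comparison; the example below involves no AI. It only illustrates the underlying task an AI-assisted exercise might automate. Every setting name and threshold in `POLICY` is a hypothetical example, not drawn from any specific standard or product.

```python
# Hypothetical policy: each setting maps to a rule its value must satisfy.
POLICY = {
    "password_min_length": lambda v: isinstance(v, int) and v >= 12,
    "mfa_required": lambda v: v is True,
    "session_timeout_minutes": lambda v: isinstance(v, int) and 0 < v <= 30,
    "tls_min_version": lambda v: v in {"1.2", "1.3"},
}

def check_config(config, policy=POLICY):
    """Return a sorted list of settings that are missing or violate the policy."""
    violations = []
    for setting, rule in policy.items():
        if setting not in config:
            violations.append(f"{setting}: missing")
        elif not rule(config[setting]):
            violations.append(f"{setting}: {config[setting]!r} does not meet policy")
    return sorted(violations)

# A hypothetical proposed configuration with a few problems.
proposed = {
    "password_min_length": 8,
    "mfa_required": True,
    "session_timeout_minutes": 60,
}

for problem in check_config(proposed):
    print(problem)
```

Running it reports the short minimum password length, the long session timeout, and the missing TLS setting, while the compliant `mfa_required` value passes silently.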

Q: Where is the balance between encouraging AI innovation and maintaining cybersecurity?

A: There is maybe a spicy danger zone. For most of the world, even our industry, security comes second: we want to build a business, and we want to be a financially healthy company one way or another.

So we build stuff, make a product, do the sales and marketing, and then security becomes an afterthought.

When it comes to further progress and innovation in research and development, we’ll push hard on that, and we’ll run fast, and we’ll break stuff, and we’ll make mistakes because that’s what people will naturally want to do first.

And then when we see it in action in the wild, that’s when we need to put these walls around it and add the guardrails and get security wrapped around it.

For a security professional looking in, that’s a worrisome thought, but that’s just the way it is.

We should still innovate, have fun, and do new stuff with reckless abandon, but we should stay cautious and keep that check in the back of our minds.